The creation of 3D content remains one of the most crucial problems for emerging applications such as 3D printing and Augmented Reality. In Augmented Reality, creating virtual content that seamlessly overlays the real environment is a key problem for human-computer interaction and many subsequent applications. In this paper, we present a sketch-based interactive tool, which we term SweepCanvas, for rapid exploratory 3D modeling on top of an RGB-D image. Our aim is to offer end-users a simple yet efficient way to quickly create 3D models on an image. We develop a novel sketch-based modeling interface, which takes a pair of user strokes as input and instantly generates a curved 3D surface by sweeping one stroke along the other. A key enabler of our system is an optimization procedure that extracts pairs of spatial planes from the context to position and sweep the strokes. We demonstrate the effectiveness and power of our modeling system on various RGB-D data sets and validate the use cases via a pilot study.
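To make the sweep idea concrete, the following is a minimal sketch of a purely translational sweep, assuming both strokes have already been lifted to 3D polylines. The function name `translational_sweep` and the sample strokes are hypothetical and chosen for illustration only; the plane-extraction optimization that positions the strokes in SweepCanvas is not reproduced here.

```python
from typing import List, Tuple

Point3 = Tuple[float, float, float]


def translational_sweep(
    profile: List[Point3], trajectory: List[Point3]
) -> List[List[Point3]]:
    """Sweep `profile` along `trajectory` by pure translation.

    Assumption (not from the paper): both strokes are 3D polylines.
    Returns a grid with grid[i][j] = profile[j] + (trajectory[i] - trajectory[0]),
    so the first row of the grid is the profile stroke itself.
    """
    if not profile or not trajectory:
        raise ValueError("both strokes need at least one point")
    ox, oy, oz = trajectory[0]
    grid: List[List[Point3]] = []
    for tx, ty, tz in trajectory:
        # Offset of the current trajectory sample from the trajectory start.
        dx, dy, dz = tx - ox, ty - oy, tz - oz
        grid.append([(px + dx, py + dy, pz + dz) for px, py, pz in profile])
    return grid


# Hypothetical strokes: a straight profile swept along a rising curve.
profile = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0)]
trajectory = [(0.0, 0.0, 0.0), (0.0, 1.0, 0.5), (0.0, 2.0, 2.0)]
surface = translational_sweep(profile, trajectory)
```

Each row of `surface` is a translated copy of the profile stroke, and adjacent rows can be connected into quads or triangles to form a mesh. A real system would also need to decide how the 2D strokes are placed in 3D, which is the role of the plane-extraction step described above.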

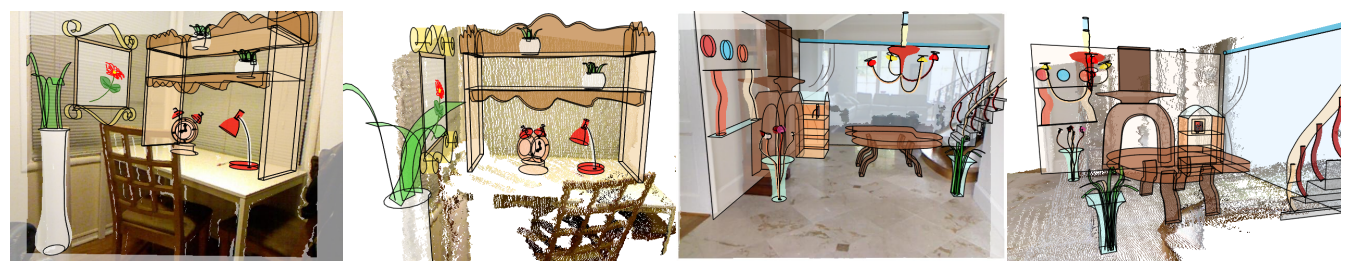

Our SweepCanvas system allows artists to quickly create 3D prototype models using a sketch-based interface on top of an RGB-D image as context. The left and right examples were created within 30 and 60 minutes, respectively, by a user with a short training period. The left and middle-right panels show the original images overlaid with the 3D models.